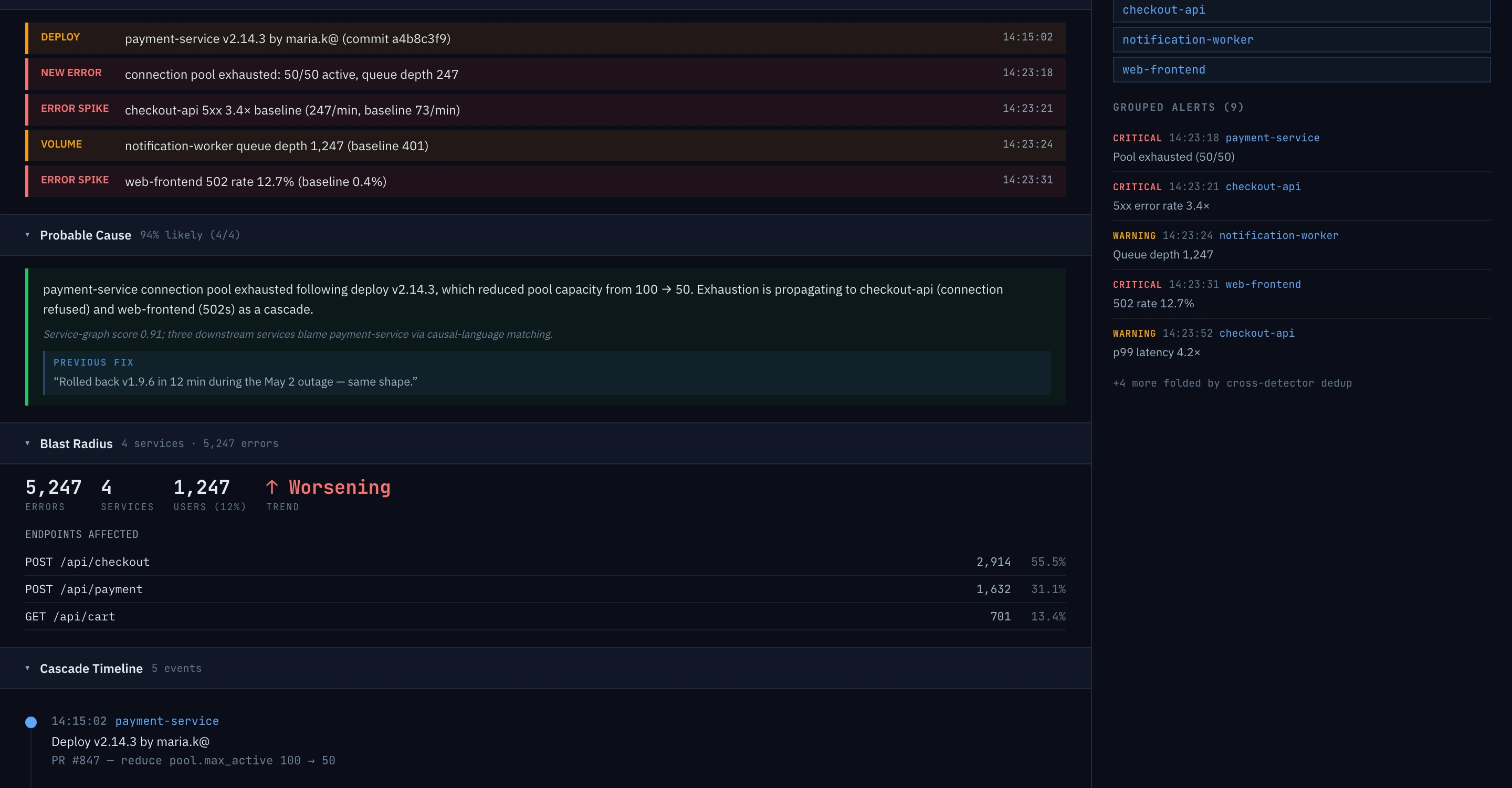

The root cause. In English.

Other log tools store your data and wait for you to ask the right question. Epok watches your logs and tells you when something is wrong — what broke, why, and which customers are affected.

Every alert tells you "3 Enterprise · 12 Pro · 47 Free affected", so on-call can decide "wake up now" vs. "wait till morning" from the notification alone. Every AI claim is cited to the log line that produced it.

Replaces the detection + alerting layer of Datadog / Splunk / CloudWatch. $500/mo flat, 14 days free, no card.

Synthetic data, real detectors. Try it →

Statistical + nine domain rule packs · every tier

Loghub HDFS replay, 2M lines · reproducible

Ingest to render · p99 124 ms · SLO 500 ms

The six that catch most incidents. Every detector runs on every tier — starting with the 14-day trial.

Statistical detection ships on every tier, including the trial. AI root cause analysis is included on every tier too: capped on Team, with a larger budget on Growth.

New Error Detection

Catches errors that have never appeared in your 7‑day baseline.
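
To make the idea concrete, here is a minimal sketch of a "never seen in the baseline" check. It is an illustration only, not Epok's implementation: the function name `new_errors` and the notion of an error "signature" string are assumptions made for the example.

```python
def new_errors(baseline_signatures: set[str], recent_signatures: list[str]) -> list[str]:
    """Return signatures from the recent window that are absent from the baseline.

    `baseline_signatures` stands in for every error signature seen during the
    baseline window (7 days in the text above). Hypothetical helper, for illustration.
    """
    seen = set(baseline_signatures)  # copy so the caller's set is not mutated
    fresh = []
    for sig in recent_signatures:
        if sig not in seen:
            seen.add(sig)      # report each new signature only once
            fresh.append(sig)
    return fresh


baseline = {"TimeoutError: upstream"}
recent = ["TimeoutError: upstream", "KeyError: user_id", "KeyError: user_id"]
print(new_errors(baseline, recent))
```

Only the `KeyError` is reported, and only once, because the timeout was already part of the baseline.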

Silence Detection

Catches services that stop logging when they normally log every N seconds. The most dangerous failure mode: no errors, just absence.
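
A silence check can be sketched in a few lines. This is a simplified illustration under stated assumptions, not Epok's detector: `silent_services`, the per-service `expected_interval` map, and the `tolerance` multiplier are all hypothetical names chosen for the example.

```python
def silent_services(
    last_seen: dict[str, float],
    expected_interval: dict[str, float],
    now: float,
    tolerance: float = 3.0,
) -> list[str]:
    """Return services whose most recent log line is older than
    `tolerance` times their usual logging interval (all times in seconds)."""
    return sorted(
        service
        for service, ts in last_seen.items()
        if now - ts > tolerance * expected_interval[service]
    )


last_seen = {"api": 100.0, "worker": 40.0}
expected_interval = {"api": 10.0, "worker": 10.0}
print(silent_services(last_seen, expected_interval, now=105.0))
```

Here `worker` has been quiet for 65 seconds against an expected 10, so it is flagged, while `api` logged 5 seconds ago and is not.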

Volume Anomaly

Detects spikes, drops, and flatlines in log volume vs daily and weekly baselines per service.
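
One common way to compare volume against a baseline is a z-score. The sketch below assumes that approach for illustration; the source does not say which statistic Epok uses, and `classify_volume` and its threshold are hypothetical. It also uses a single list of baseline counts where a real detector would keep separate daily and weekly baselines per service.

```python
from statistics import mean, pstdev


def classify_volume(baseline: list[int], current: int, z_threshold: float = 3.0) -> str:
    """Label the current log count as 'spike', 'drop', 'flatline', or 'normal'.

    `baseline` holds counts for comparable past intervals and must be non-empty.
    """
    mu = mean(baseline)
    sigma = pstdev(baseline)
    if current == 0 and mu > 0:
        return "flatline"  # the service normally logs, and now logs nothing
    if sigma == 0:
        # A perfectly flat baseline: any deviation at all is notable.
        if current == mu:
            return "normal"
        return "spike" if current > mu else "drop"
    z = (current - mu) / sigma
    if z > z_threshold:
        return "spike"
    if z < -z_threshold:
        return "drop"
    return "normal"


baseline = [100, 110, 90, 105, 95]
for count in (102, 200, 60, 0):
    print(count, classify_volume(baseline, count))
```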

Pattern Clustering

Groups errors with similar templates so many variants of the same problem cluster into one alert.
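
Template-based grouping can be illustrated by masking the variable parts of each message and grouping on what remains. This is a deliberately simple sketch, not Epok's clustering algorithm: the masking rules and the names `to_template` and `cluster` are assumptions made for the example.

```python
import re
from collections import defaultdict

# Illustrative masking rules, applied in order: UUIDs first, then plain numbers.
_MASKS = [
    (re.compile(r"\b[0-9a-f]{8}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{12}\b"), "<uuid>"),
    (re.compile(r"\b\d+(?:\.\d+)?\b"), "<num>"),
]


def to_template(message: str) -> str:
    """Replace variable tokens with placeholders so variants share one template."""
    for pattern, placeholder in _MASKS:
        message = pattern.sub(placeholder, message)
    return message


def cluster(messages: list[str]) -> dict[str, list[str]]:
    """Group messages by their masked template."""
    groups: dict[str, list[str]] = defaultdict(list)
    for message in messages:
        groups[to_template(message)].append(message)
    return dict(groups)


logs = [
    "payment 1001 failed after 3 retries",
    "payment 2002 failed after 5 retries",
    "cache miss for key abc",
]
for template, members in cluster(logs).items():
    print(len(members), template)
```

The two payment failures collapse into one template, which is the behavior that lets many variants of the same problem surface as a single alert.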

Kubernetes Detection

70+ rules for OOMKilled, CrashLoopBackOff, ImagePullBackOff, FailedScheduling, and more.
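
As a rough picture of what a rule pack does, the sketch below matches log lines against the four Kubernetes reasons named above. The rule table, the descriptions, and `match_k8s` are hypothetical and far simpler than a real rule set; they are not Epok's rules.

```python
# Hypothetical mini rule pack: Kubernetes reason -> plain-English meaning.
K8S_RULES = {
    "OOMKilled": "container was killed for exceeding its memory limit",
    "CrashLoopBackOff": "container keeps crashing and is being restarted with back-off",
    "ImagePullBackOff": "container image could not be pulled",
    "FailedScheduling": "scheduler could not place the pod on any node",
}


def match_k8s(line: str) -> list[tuple[str, str]]:
    """Return (reason, meaning) pairs for every known reason found in the line."""
    return [(reason, meaning) for reason, meaning in K8S_RULES.items() if reason in line]


print(match_k8s("pod web-7d9f status=CrashLoopBackOff restarts=12"))
```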

Dependency Detection

Upstream service failures, circuit breaker trips, retry exhaustion, and cascading failures between services.

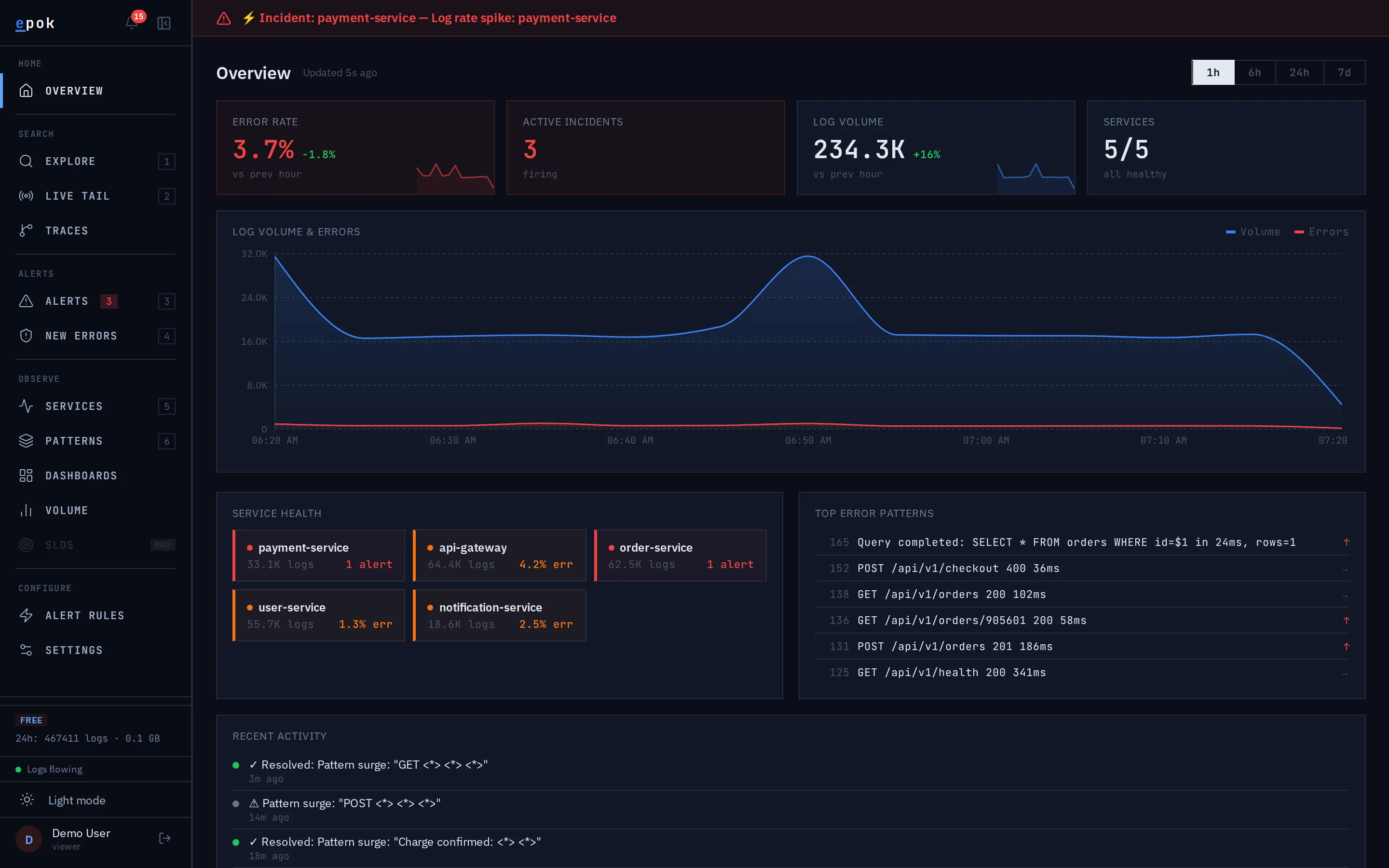

See it on data. No signup.

A 5-service example app generates a continuous synthetic log stream into a public Epok tenant. Anomaly detection, root cause analysis, and pattern clustering all run on it live, so what you see is Epok working on realistically shaped data, not a marketing video.

The demo runs the same product as the trial. Same UI, same detectors.

Detection is table stakes. What happens next is the product.

Datadog and Splunk catch signals too. Epok closes the loop — customer impact, plain-English search, evidence-cited postmortems, and your own runbooks matched into every alert.

When you'd actually use it.

Two moments that matter most — the deploy check and the incident response.